When Erika Yeung arrived at Princeton, she knew she was drawn to the intersection of hardware and intelligence: the idea that physical systems like chips and sensors could not only process information, but also perceive the world, learn from data, and adapt over time. As a sophomore in the Electrical and Computer Engineering department, she took a bold step into that space through independent research with Professor Hossein Valavi. Her work focused on how neural networks, which are computer models inspired by the way the human brain processes information, can be redesigned to run efficiently on edge devices. These are small, local devices such as phones, sensors, or embedded systems that operate without relying on distant cloud servers.

At the heart of Erika’s work was quantization, a technique that reduces the numerical precision of neural network weights, which are the internal values that determine how the model makes decisions. Instead of using highly precise numbers, quantization simplifies them into smaller, more compact representations. This allows the model to take up less memory and run faster, ideally while still maintaining strong performance. This idea is central to fields like Edge AI and TinyML, which aim to move machine learning out of large data centers and into everyday devices, from wearable health monitors to autonomous systems operating far from the cloud. Running AI locally means the models must be not only accurate, but also lightweight, fast, and energy-efficient. Quantization offers one of the most promising ways to make that possible.

Understanding How Neural Architectures Respond to Quantization

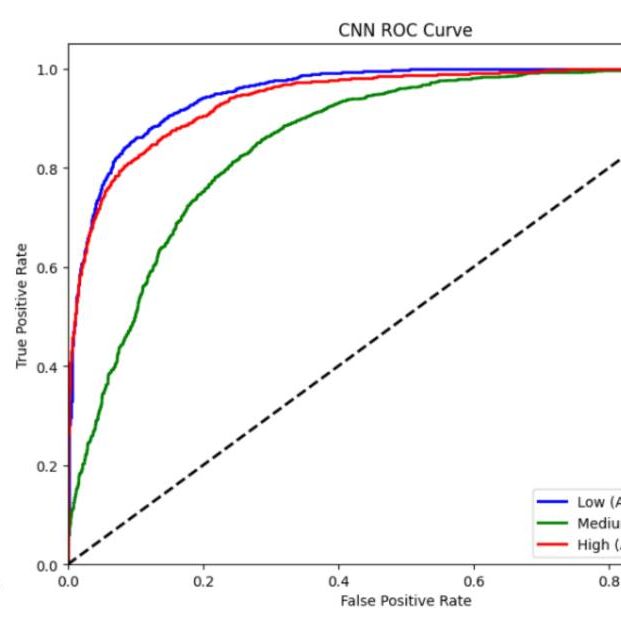

Erika’s project explored how uniform weight quantization, in which weights are rounded to a fixed set of evenly spaced values, affects different types of neural networks. She tested bit widths from 1 to 16 bits, where fewer bits mean more compression but less detail. She applied this to three major architectures: fully connected multilayer perceptrons, convolutional neural networks, and transformer-based language models. Each represents a different approach to learning. Fully connected networks rely on dense connections between layers, convolutional neural networks capture spatial patterns in data such as images, and transformers use attention to model relationships in sequences such as language.
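For readers who want to see the idea in code, here is a minimal sketch of uniform weight quantization. It is not Erika’s implementation: it assumes a simple min-max scheme in which each weight is rounded to the nearest of 2^bits evenly spaced levels between the smallest and largest weight, and the weight values and function names are made up for illustration.

```python
def quantize_uniform(weights, bits):
    """Round each weight to one of 2**bits evenly spaced levels in [min, max].

    Assumption: a min-max uniform scheme, chosen only for illustration.
    """
    if bits < 1:
        raise ValueError("bits must be at least 1")
    lo, hi = min(weights), max(weights)
    if hi == lo:
        return list(weights)  # all weights identical, nothing to round
    steps = 2 ** bits - 1  # number of gaps between the lowest and highest level
    step = (hi - lo) / steps
    return [lo + round((w - lo) / step) * step for w in weights]


def mean_squared_error(original, approximated):
    """Average squared difference between two equal-length lists."""
    return sum((a - b) ** 2 for a, b in zip(original, approximated)) / len(original)


# Made-up weights standing in for one layer of a trained model.
weights = [-0.82, -0.41, -0.05, 0.0, 0.13, 0.37, 0.66, 0.91]

for bits in (1, 2, 4, 8, 16):
    quantized = quantize_uniform(weights, bits)
    error = mean_squared_error(weights, quantized)
    print(f"{bits:>2} bits: rounding error (MSE) = {error:.2e}")
```

At 1 bit every weight collapses to one of just two values, while at 16 bits the rounded weights are nearly indistinguishable from the originals, which is the trade-off the bit-width sweep is meant to expose.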

Using Google Colab, a hosted notebook environment for running code in the browser, Erika evaluated how quantization impacted both regression tasks, which predict continuous values, and classification tasks, which assign labels. She measured performance using metrics like mean squared error, accuracy, and F1 score, while also analyzing compression and how sensitive different parts of each model were to reduced precision.
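The metrics named above are standard, and a short sketch may help make them concrete. The labels and predictions below are invented, the helper names are hypothetical, and the compression ratio assumes a 32-bit floating-point baseline, which the article does not specify.

```python
def mean_squared_error(y_true, y_pred):
    """Regression metric: average squared gap between targets and predictions."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def accuracy(y_true, y_pred):
    """Classification metric: fraction of labels predicted correctly."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)


def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def compression_ratio(bits, baseline_bits=32):
    """How many times smaller the weights are (assumes a 32-bit baseline)."""
    return baseline_bits / bits


# Invented classification labels and predictions.
y_true = [1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 0, 1, 1, 1]
print("accuracy:", accuracy(y_true, y_pred))
print("F1 score:", f1_score(y_true, y_pred))

# Invented regression targets and predictions.
print("MSE:", mean_squared_error([2.0, 3.5, 5.0], [2.5, 3.5, 4.0]))

print("compression at 4 bits:", compression_ratio(4))
```

Accuracy alone can look flattering when one class dominates the data, which is one reason to report it alongside F1.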

Her results revealed clear differences. Convolutional neural networks were the most robust, maintaining strong performance even at low precision. Fully connected networks degraded more quickly, showing a stronger dependence on precise values. Transformer models were the most sensitive, but also the most promising. With careful techniques like mixed precision, where different parts of the model use different levels of detail, they showed potential to handle quantization effectively.
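Mixed precision can be pictured as giving each part of a model its own bit width. The sketch below is one illustrative heuristic, not the method used in the project: for each layer it picks the smallest bit width whose rounding error stays under a tolerance, with tighter tolerances for layers assumed to be more sensitive. The layer names, weights, tolerances, and candidate bit widths are all hypothetical.

```python
def quantize_uniform(weights, bits):
    """Round each weight to one of 2**bits evenly spaced levels in [min, max]."""
    lo, hi = min(weights), max(weights)
    if hi == lo:
        return list(weights)
    step = (hi - lo) / (2 ** bits - 1)
    return [lo + round((w - lo) / step) * step for w in weights]


def pick_bits(weights, tolerance, candidates=(2, 4, 8, 16)):
    """Return the smallest candidate bit width whose rounding MSE is within tolerance.

    Falls back to the largest candidate if none meets the tolerance.
    """
    for bits in candidates:
        quantized = quantize_uniform(weights, bits)
        error = sum((w - q) ** 2 for w, q in zip(weights, quantized)) / len(weights)
        if error <= tolerance:
            return bits
    return candidates[-1]


# Hypothetical layers with made-up weights.
layers = {
    "attention": [-0.48, -0.21, -0.07, 0.02, 0.19, 0.33, 0.52],
    "feed_forward": [-0.61, -0.30, -0.12, 0.05, 0.24, 0.40, 0.39],
}

# Assumed tolerances: the layer treated as more sensitive gets the tighter one.
tolerances = {"attention": 1e-6, "feed_forward": 1e-2}

plan = {name: pick_bits(w, tolerances[name]) for name, w in layers.items()}
for name, bits in plan.items():
    print(f"{name}: {bits} bits")
```

In practice, sensitivity would be judged by how much the model’s task performance drops rather than by raw rounding error, so this should be read only as a picture of the idea.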

“It was surprising to see how differently each architecture behaved,” Erika reflected. “It made me realize that efficiency is not just about compressing everything the same way. It is about understanding the structure of the model itself.”

Together, these findings highlight an important idea. Building efficient AI systems is not just about making models smaller, but about understanding how their structure shapes performance under real-world constraints.

Learning to Adapt, From Hardware Deployment to Simulation

Erika’s original vision was ambitious. She aimed to deploy a quantized language model on a Raspberry Pi and directly measure power consumption, bringing theory into physical reality. However, as the semester unfolded, hardware limitations, tooling challenges, and steep learning curves introduced unexpected obstacles.

Rather than pushing forward with incomplete or unreliable results, Erika demonstrated the kind of intellectual maturity and judgment that define strong researchers. Together with Professor Valavi, she made the deliberate decision to pivot toward simulation-based experiments. By conducting controlled quantization studies in Google Colab, she was able to produce more rigorous, reproducible results while building a deeper understanding of the underlying principles.

Professor Valavi’s mentorship played an indispensable role in guiding this transition. Known for his deep expertise in machine learning systems, he fostered an environment where curiosity and resilience could thrive. His guidance encouraged Erika not only to solve technical problems but also to think critically about the broader research process.

“I am incredibly grateful to have worked with Professor Valavi,” Erika said. “His mentorship made a huge difference. He encouraged me to think critically, to ask better questions, and to trust the research process.”

Growth Beyond the Technical Work

Independent research challenged Erika in ways she had not anticipated. It required her to grapple with unfamiliar tools, absorb new theoretical concepts quickly, and confront the open-ended nature of scientific inquiry. Unlike structured coursework, there were no predefined answers—only questions, hypotheses, and the persistence to explore them.

Through this process, Erika discovered the creative dimension of engineering. “This experience taught me that research is not about getting everything right immediately,” she said. “It is about learning continuously, refining your approach, and staying curious even when things do not work the first time.”

Her experience also deepened her appreciation for how Electrical and Computer Engineering serves as a bridge between theory and reality. Concepts from signal processing, computer architecture, and machine learning converged into a cohesive framework for designing intelligent systems. What once existed as isolated topics in lecture halls became interconnected tools for innovation.

The Power of Mentorship and Independent Exploration

Reflecting on the experience, Erika speaks with immense gratitude for Professor Valavi’s mentorship, which created a space where she could grow not only as a student but also as an emerging researcher.

Independent work offered Erika something uniquely valuable: the freedom to pursue questions driven by genuine curiosity. It allowed her to engage deeply with a problem, develop technical independence, and experience firsthand the challenges and rewards of research.

“It’s one of the most meaningful experiences you can have as an undergraduate,” Erika reflected. “You learn so much—not just about engineering, but about how to think, how to adapt, and how to keep going when things don’t work the first time.”

Looking Forward

Erika’s work represents an early step into a field that is rapidly shaping the future of computing. As AI continues to move beyond centralized infrastructure and into embedded systems, the importance of efficient neural network design will only grow.

Erika strongly encourages other students, especially first-years and sophomores, to consider getting involved in research early. Independent work provides a unique opportunity to explore personal interests, develop technical skills, and build meaningful relationships with mentors. Her research has not only contributed valuable insights into quantization and neural architecture sensitivity, but has also laid a foundation for future exploration. With Professor Valavi’s continued mentorship and her own growing expertise, Erika is poised to continue pushing the boundaries of efficient AI systems.

“If you are even slightly curious about research, you should try it,” she said. “It is one of the best ways to learn what engineering really is.”

– Aishah Shahid, Engineering Correspondent